Beyond the Hype Cycle: What a Year of Global Conversations Taught Me About AI

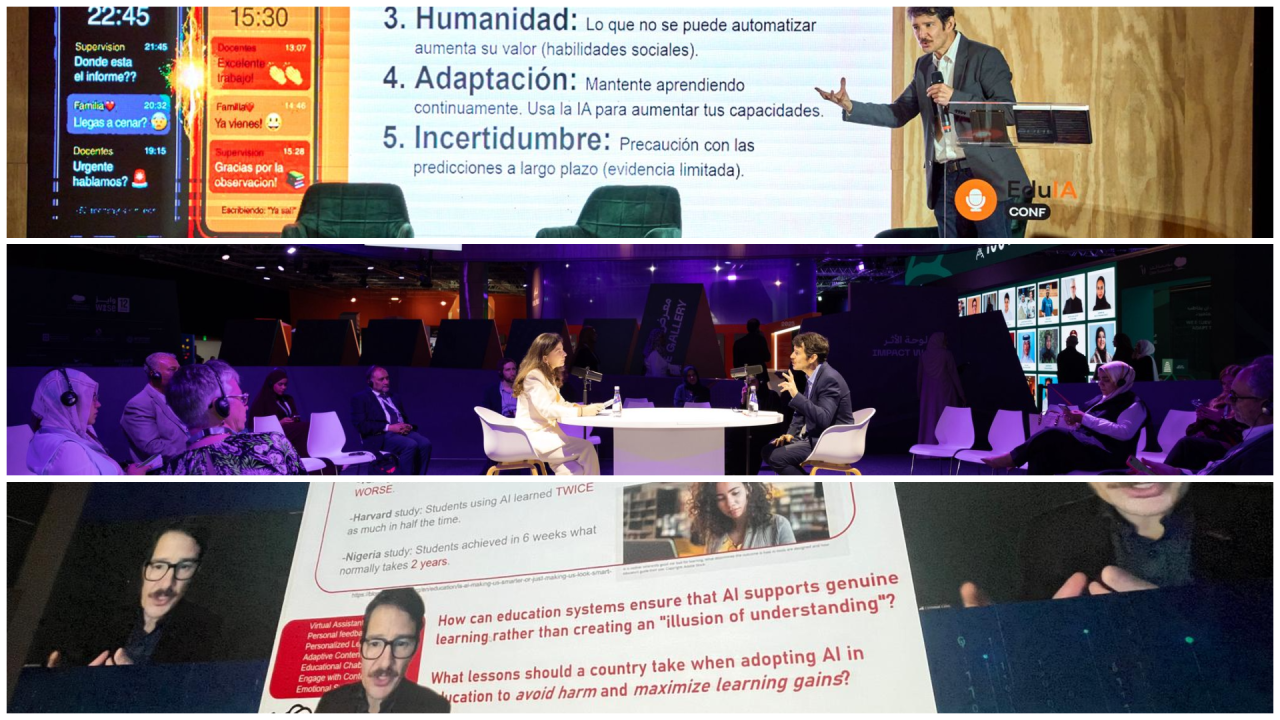

As the year comes to an end, I look back on an unusually busy 2025, which gave me the chance to engage with policymakers, scholars, and experts across the Americas, Europe, Asia, and the Middle East, including representatives from more than two dozen countries. Across these exchanges, one pattern repeated: AI is seen as accelerating fast, and there is strong pressure to “catch up,” sometimes driven by evidence but mostly by rhetoric about who leads in AI. One clear lesson is that engagement with industry cannot follow a provider–consumer model. It must demand shared responsibility for outcomes, real transparency, and a better balance between profit and human-centered wellbeing. It must also be backed by stronger public capacity (skills, infrastructure, standards, oversight, and public–private coordination) to apply AI responsibly and at scale. To close the year, here are the main takeaways and lessons from these conversations.

- Uncertainty and novelty bias are shrinking attention spans and the depth of analysis. The inability to keep up with constant change fuels FOMO (fear of missing out) and weakens sustained thinking. Novelty becomes the signal, and anything not new is treated as irrelevant.

- Fear of obsolescence is the dominant emotional driver, even more than fear of job loss. Displacement concerns exist, but disruption has not arrived at the predicted speed. Still, the fear of being left behind is shaping choices and creating a sense of urgency.

- These technologies are already changing how people think, work, and produce knowledge. They influence how information is created and how tasks are organized. They also shape what people notice, value, and prioritize.

- Efficiency is winning over quality, and automation over augmentation. The goal is often faster and cheaper delivery of the same outputs. Too little attention goes to doing things better or to rethinking the underlying models and purposes of the work.

- The debate remains techno-centric, and human perspectives are missing. If AI is for people, the humanities and social sciences must be part of the conversation from the start, not brought in as an afterthought. A more democratic discussion needs more than technical voices.

- Tools are prioritized over systems, delaying institutional change. Adoption moves faster than governance, regulation, and capacity-building. Public institutions and the private sector need new capabilities, not just new products.

- Power is concentrated, and participation is uneven. A few AI hubs set the agenda while most places remain in adoption mode. This concentrates voice and influence and leaves out people with less technical literacy and those outside major cities.

[cross post]